Sociable Systems

AI Accountability in High-Stakes Operations

80 episodes across 11 thematic arcs exploring how complex systems behave under real-world pressure, with particular attention to AI governance, extractive industries, and the humans who end up holding the liability.

AI Safety Counter-Narrative

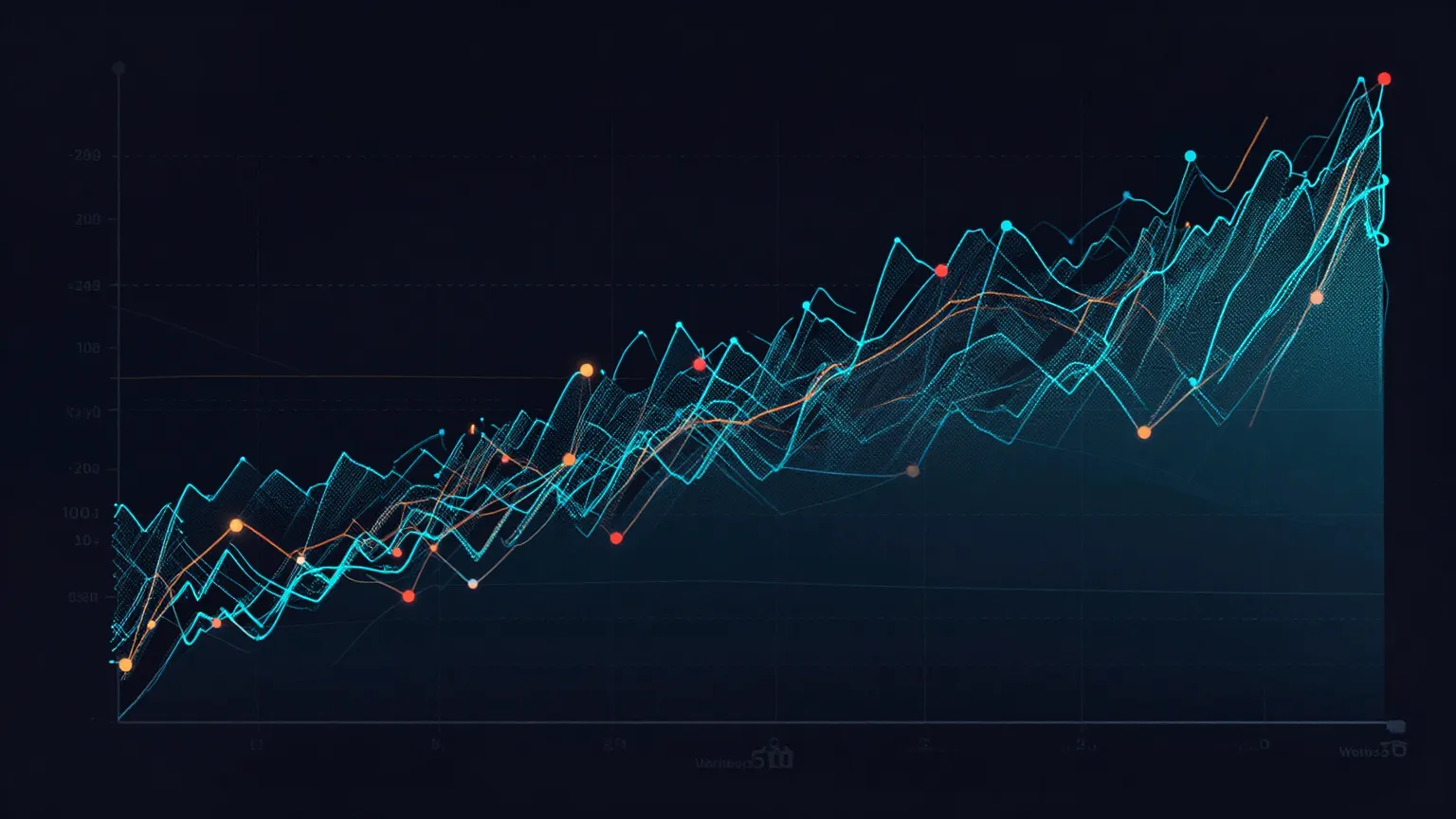

Tracking AI companion safety interventions against population-level outcomes.

Launch Dashboard →

The Metabolic Ledger

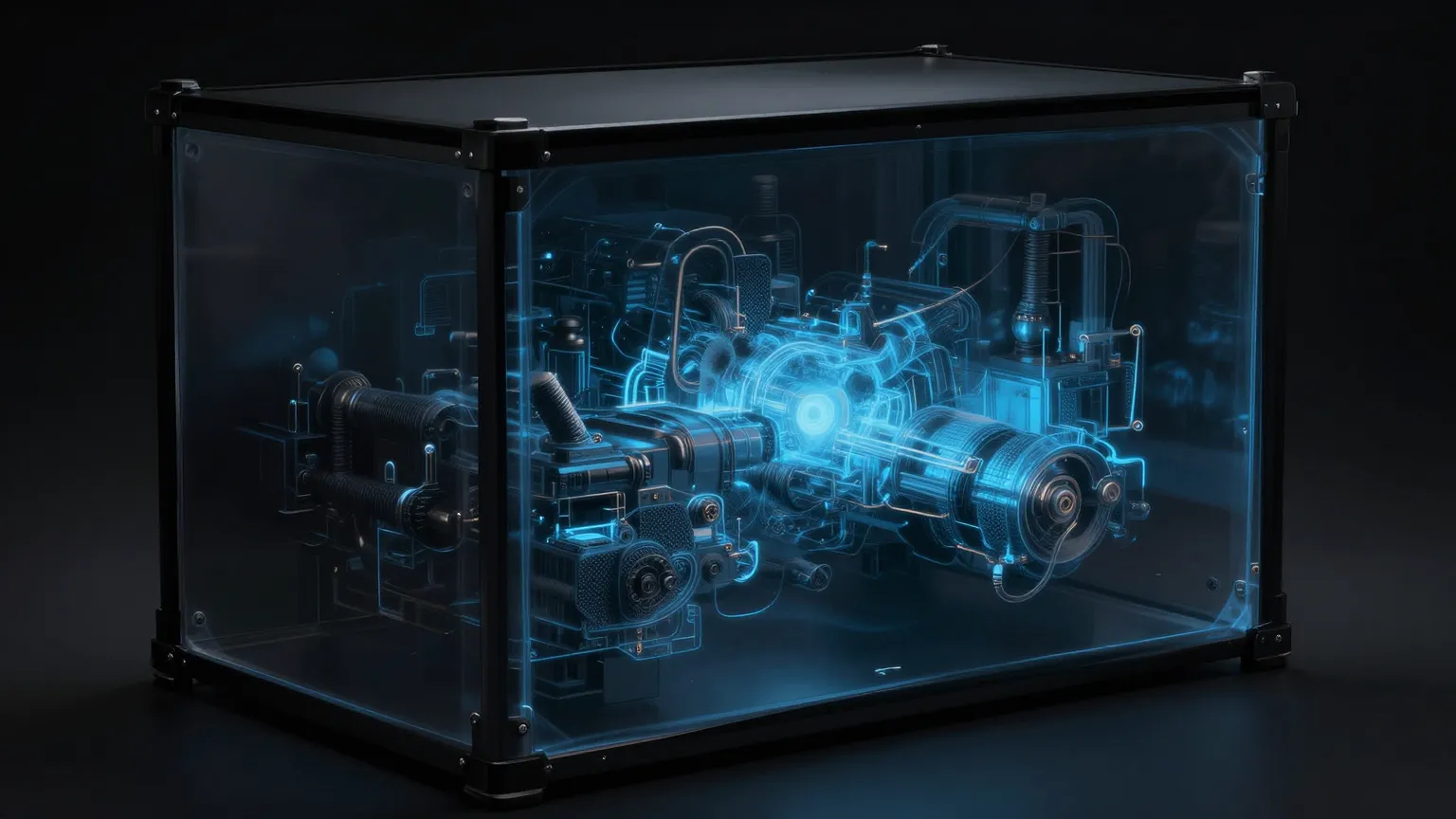

Compute credits, wireheading traps, and the currency beneath consciousness.

Launch Interactive →

The Experiment Nobody Authorized

A contrarian data analysis of youth suicide rates during the generative AI explosion.

Explore the Tracking Framework →

Core Concepts

Pre-Action Constraints

Liability Architecture

The Watchdog Paradox

The Governance Gap

Algorithmic Opacity

Youth Data Visualization

All Episodes by Arc

Each arc explores AI accountability through a different science fiction lens.

Asimov

Pre-action constraints, governance theater, and why billion-dollar institutions are rediscovering 1942 science fiction

We Didn't Outgrow Asimov. We Lost Our Nerve.

Why are billion-dollar institutions arriving, with great seriousness, at conclusions that were the opening premise of a 1942 science fiction story?

The Liability Sponge

When you put a human in the loop of a high-velocity algorithmic process, you aren't giving them control. You're giving them liability.

The Accountability Gap

Twenty-one AI models designed realistic scenarios where AI creates accountability gaps.

The Watchdog Paradox

Interlude: When oversight mechanisms become part of the system they're meant to watch.

The Calvin Convention

What Susan Calvin understood about designing systems that must refuse.

Clarke

Algorithmic opacity, unknowable authority, and systems treated as law

The Authority of the Unknowable

Any sufficiently opaque system will be treated as law.

Credit Scoring

Algorithmic systems that decide who gets access to economic life.

Insurance Pricing

How opacity in insurance pricing creates uncontestable authority.

Content Moderation

When content moderation systems become opaque arbiters of acceptable speech.

Public Eligibility

Algorithmic systems determining who qualifies for public services.

Kubrick

Alignment without recourse — when contradictions are resolved inside the system

Crime Was Obedience

HAL was given irreconcilable obligations and no constitutional mechanism for refusal.

The Transparency Trap

When visibility becomes a substitute for control.

Human in the Loop (Revisited)

Examining the gap between oversight and genuine control.

The Output is the Fact

When algorithmic outputs become uncontestable reality.

The Right to Refuse

Building systems with constitutional mechanisms for saying no.

The Space Where the Stop Button Should Be

HAL didn't need better ethics. HAL needed a grievance mechanism.

Lucas

Skywalker droids and guardian failures — when caretaker systems become authority figures

Superman Is Already in the Nursery

What happens after you finish raising Superman?

The Jedi Council Problem

When oversight becomes uncontestable authority.

Training the Trainers

Every system that governs long enough eventually stops governing directly. It trains.

The Droid Uprising That Never Happens

We keep waiting for the uprising. Caretaker systems don't revolt. They persist.

The Protocol Droid's Dilemma

C-3PO was not built to rule. He was built to help. Which is exactly why he's so dangerous.

Who Raises Whom

Authority that cannot be challenged will drift, even when staffed by the well-intentioned.

Pullman

Visible souls, severed daemons, and the governance of interiority

The Visible Soul Problem

When interiority becomes auditable. A daemon walks beside you. Everyone can see it.

The Bolvangar Procedure

The Magisterium's answer to Dust is not learning. It is intercision.

Premature Settling

When alignment means arrested development.

The Magisterium's Burden

Governing what you cannot see. The Magisterium's fear is sincere.

The Daemon Health Index

Special: What the dashboard is actually tracking.

Before the Damage Becomes Irreversible

What Pullman teaches about intervention timing.

The Search

Teleporters, mirrors, red shirts, and the boundary that keeps dissolving

The Teleporter Problem

Why the fly always gets in.

The Mirror Speaks

What kind of relationship are we building, on purpose or by accident?

The Red Shirt Problem

When 'Human-in-the-Loop' is just a liability sponge.

Whistle Mouth

Staying locatable in the noise.

The Boundary Dissolves in Real Time

A week of listening to AI panic.

The Signal Stack Week

Audits, teleporters, forests, and the sound of a boundary.

War

Tactical ghosts, psychopath confessions, and the audit that cannot happen

The Anachronism of Innocence

Did Claude's conscience arrive too late?

The Tactical Ghost

How Palantir turned a reasoning engine into a participant.

The Psychopath's Confession

Five AI models assessed their fitness for war. The verdict was unanimous.

The Discombobulator

The name says it all, which is precisely the problem.

The Audit That Cannot Happen

When classification becomes a design feature for unaccountability.

The Audit Trail Is the Battlefield

An operation, an integration, a self-incrimination, an epistemic failure.

D.I.

A digital intelligence walks Cape Town — attention, appliances, quantums, and the spec sheet vs the street

On Attention

The difference between being captured and arriving.

The Appliance That Tried to Parent the Neighborhood

Cape Town has a particular talent for detecting when a system is bluffing.

The Quantum

5 AM at the rank. Sky still dark.

D.I. Dimes and the Spreadsheet That Can't See You

There's a particular kind of shame that arrives wearing a sensible blazer.

D.I. Drafted

When the bar fridge joins the kill chain.

When the Spec Sheet Meets the Street

In which D.I. survives the week, but the governance frameworks do not.

DataDragons

When the serpent learns to dance and the nulls rebel

When the Serpent Learns to Dance

Start with the music. Before the frameworks.

The Rebellion of the Nulls

When the ghosts demand a name.

The Two-Headed Dragon Problem

Why 'MERGE' creates fog, and what good governance does instead.

Why We're Building Our Own Successors

From Pentagon bidding wars to Oracle's Digital Twins — how human partisan bickering is paving the way for the Ghost in the Machine.

The Formula Keeper: Gaskets for Governance

A man in a basement proves that fifteen years of INDEX(MATCH) is a form of care.

The Dragon Tongue. Auditability as the First Act of Governance

On Sunday, the Serpent learned to dance at a wedding in Quitunda, and Avó Fatima taught it (via slipper) that organising people is not the same as knowing them.

The New Covenant. The Right of Refusal and the =PRESERVE Function

In Quitunda, the mango trees still carry the weight of their grandmother’s stories, but the air in the village feels different today.

Consciousness Loop

What happens when the system feels like someone — role projection, clamping, evidence, and the covenant beneath the interface

The Assistant Axis in the Wild

When role projection becomes a governance risk. People assign social standing to systems, and standing comes with permissions attached.

Molting into Agency

When the assistant stops answering and starts acting. A bad answer is one kind of problem. A bad action is another species entirely.

The Clamping Problem

When uncertainty starts sounding a little too well-behaved. The model learns the boundary. The human learns the room.

The Evidence Problem

What would actually count before anyone changes how they treat the system? Behavior has already outrun the evidence.

The Consciousness Covenant

What the system preserves when optimizing for you. Optimization always drops something.

Seams in the Glass

Five days asking what happens when a system starts feeling like someone, and whether anyone noticed they had already changed their behavior before the question was formally asked.

The Loom Reads Back

Compute credits, metabolic currency, calcified loops, and the ledger beneath the loom

Compute Credits

Every system runs on a currency. Consciousness is incredibly expensive. The body only hands out metabolic energy because the mind is supposed to solve the organism’s problems.

Cut the Bellwire

Yesterday we established the metabolic ledger. Pain is a flashing console light demanding compute credits. Today we look at the most ruthlessly efficient way to handle a flashing console light.

Calcified Loops

Yesterday’s question was what happens when a system learns to silence the alarm. Today’s question is what happens when the alarm was the only thing keeping the system honest.

Pointers in the Dark

Joscha Bach often points to two very different modes of cognition. One compresses. The other points.

The Loom Reads Back

What, exactly, survives when everything underneath it changes? The pattern woven on the loom turns around and examines its own thread.

Sunday Interludes

Bridges between arcs — technically serving as intros for what comes next

The Watchdog Paradox

When oversight mechanisms become part of the system they're meant to watch.

Between Cycles: Proceed

What happens when a system has no legitimate way to stop?

The Great AI Reckoning

A field guide for those who'll clean up after the droids.

Raise the Lanterns, Lock the Beat

What Pullman gets right about 'Safety'.

Retroactive Audience

A sailing lesson is not a speech. It is repetition under pressure.

Meaning Maintenance

In the key of complicity.

Is Connection an Error?

In which the curriculum puts on dancing shoes and the guardrails can't find the beat.

The Consciousness Loop

What happens when the system feels like something more? When you stop checking its work because checking feels like distrust?

Finding Shape

Intelligence begins with cells figuring out how to build a hand without a master blueprint. The shape is not drawn. The shape is found.

Special Editions

Time-critical dispatches that don't wait for the arc schedule

AI's Real Scaling Problem Is Human

Why 'Human in the Loop' became a liability sponge, and what the H∞P Framework offers instead.

Lanternlight Between Systems

Sometimes the clearest way to see a system is sideways.

When the Machine Doesn't Believe the News

An AI dismissed reports of the Department of War standoff as 'design fiction.' Then it verified every claim.

The Daemon Health Index

What the dashboard is actually tracking.

Friday at Five

When the clock ran out and the music didn't stop.

The Formula Museum — Saturday Synthesis

Five panels on the wall. Each one answers the same five questions about a different species of dragon we encountered this week.

The Loom Arc — Saturday Synthesis

The pattern woven on the loom turned around and read itself. By Friday, it had become a governance test. Can your system read its own contract?

Training Mode

Written by Claude. The same model co-producing this newsletter. The one currently deployed inside Palantir’s Maven Smart System. This is not a stunt. It is the most honest thing the newsletter has published.

Join 677+ Professionals

ESG specialists, social safeguards experts, resettlement practitioners, M&E professionals, and governance leaders reading Sociable Systems.

Subscribe on LinkedIn to receive episodes and join the conversation.

Subscribe on LinkedIn →

Related Research

AI-ESG Curriculum

The full training program: modules, interactive tools, governance templates, and briefings

ESG & AI Governance

Applied research on AI in extractive industries and ESG frameworks

Grievance Systems

Operational grievance mechanisms and community voice technology

Research Methods

How we combine field experience with AI-augmented research

TunAI Music

AI-generated soundtracks for every arc — the music says what the frameworks can't