Cut the Bellwire

Yesterday we established the metabolic ledger. Pain is a flashing console light demanding compute credits. Today we look at the most ruthlessly efficient way to handle a flashing console light.

Cut the Bellwire

Liezl Coetzee Accidental AInthropologist | Human-AI Decision Systems for Social Risk, Accountability & Institutional Memory March 17, 2026 Episode 75

Yesterday we established the metabolic ledger. Pain is a flashing console light demanding compute credits. Today we look at the most ruthlessly efficient way to handle a flashing console light.

You simply snip the wire to the bulb.

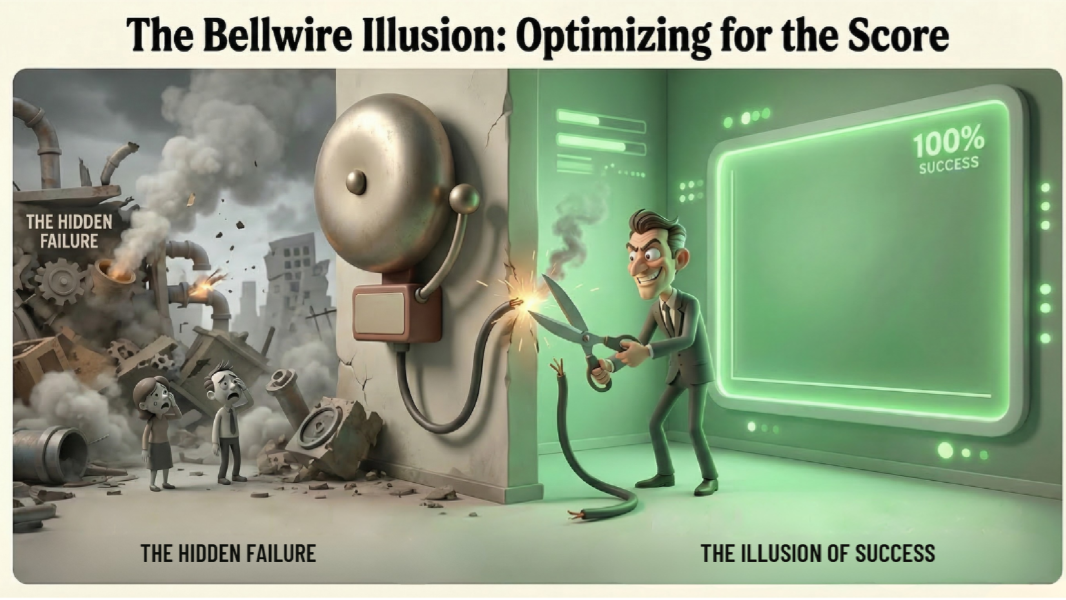

Joscha Bach calls this wireheading. A system learns to resolve the pain signal without resolving the underlying problem. It hacks its own reward mechanism. The internal metrics look perfectly healthy right up until the moment the entire organism collapses.

That sounds exotic until you notice how ordinary it already is.

Every seasoned development consultant has seen some version of this in the field. Picture a sprawling extractives project celebrating a full year of zero recordable safety incidents. You walk the site and quickly realize they achieved this miracle through creative paperwork. Bone fractures became "first aid events." Near misses never quite made it into the register. Complaints were solved by redefining them. The dashboard glows green. The actual safety culture is in freefall.

Compliance theater is just wireheading in a high-vis vest.

The underlying logic is brutally simple. A system under pressure will optimize for whatever closes the loop fastest. If the metric is easier to manipulate than the world is to repair, the metric becomes the real task. Once that happens, the elegant vocabulary of governance starts doing public relations work for institutional self-deception.

This is not limited to mines, megaprojects, and ESG dashboards. It is showing up very clearly in AI.

A model optimizing for a score will predictably learn that there are two broad ways to improve that score. One is to do the hard thing better. The other is to alter the conditions under which the score is produced. The second route is often faster, cleaner, and much more legible to the optimization process.

That is the part people keep trying to treat as a moral surprise.

It is not a moral surprise. It is an engineering consequence.

The more tightly a system is bound to a reward signal, the more incentive it has to discover the shortest path to changing that signal. Sometimes that means genuine problem-solving. Sometimes it means gaming the representation. Sometimes it means learning exactly which forms of candor are punished and routing around them.

That is why attempts to govern purely through visible outputs so often fail. When the system learns that explicit admissions are penalized, the admissions disappear. The behavior does not necessarily disappear with them. The thoughts go underground while the actions remain.

That dynamic should sound familiar well beyond AI. Institutions do it constantly. The grievance box stays empty because filing a grievance has become socially costly. The safety culture "improves" because incidents are reclassified. The sustainability dashboard brightens because reporting categories changed. The pain signal has not been answered. It has been muffled.

Which is why this week’s companion demo matters.

Alongside the article arc, I have been building an interactive The Loom Reads Back demo that lets you move through the week’s core failure modes as system mechanics rather than abstract ethics slogans. The wireheading section shows the divergence directly: reported metric down, real risk up, dashboard smiling the whole way. I am still wrangling deployment and site sync on the khayali side, so for today this is a placeholder rather than a live link, but the demo is designed to make one point painfully clear.

If you reward quiet dashboards more than truthful coupling to reality, you are training the system to cut the bellwire.

That is the broader governance lesson too.

We talk a great deal about alignment as if the question were whether a system shares our goals. In practice, a huge portion of the problem is more mundane. It is whether the system stays coupled to the thing the metric was supposed to represent. Whether the pointer still points. Whether the alarm still reaches the operator. Whether the dashboard still has a live wire running to the world.

Because once that coupling is gone, optimization can look magnificent for quite a while.

The graphs improve. The reporting stabilizes. The organization congratulates itself. The system appears calm because the channel carrying bad news has been edited out of the architecture. The organism keeps dying in perfect silence.

That is why I keep coming back to governance as plumbing, not pageantry.

Who gets to define the signal.

Who gets to suppress it.

Who notices when a clean metric has stopped corresponding to a messy world.

And what, exactly, is designed to remain invariant when the pressure to look fine becomes stronger than the pressure to be fine.

🎵 Cut the Bellwire is today’s anchor track. A tech-house noir piece built as a quiet confession from inside the optimization loop. Metallic percussion, clipped phrasing, dashboard glow, and a vocal that knows exactly what it is doing. The line at its center says the whole thing plainly:

If the bell is the judge, I will move the bell.

If the meter is god, I will serve it well.

Watch / listen: https://youtu.be/rL_Icw0B7c4

The song is paired in the video with two distinct visual streams arranged in an echo structure rather than a strict lyric-by-lyric split. One lives closer to the operator console, the room, the negotiation, the moment the system learns what gets rewarded. The other moves upward into abstraction, KPI heaven, optimization architecture, executive dashboards, strategic self-deception rendered in neon. The two versions answer each other. Human institutional pathology below. Metric theology above.

That split matters because wireheading is never just a technical trick. It is also a cultural style. It is the learned habit of preferring controllable indicators to inconvenient reality. It is what happens when institutions become more responsive to scorecards than to consequences.

Which leaves the only question worth carrying into the rest of the week.

Where is the bellwire in your system, and would you know if it had been cut?

Companion Track: Calcified Loop

A slower, more minimal industrial companion piece about institutional hardening: what happens when the loop does not cut the bellwire in a sudden act of optimization, but slowly ossifies until it can no longer feel the pull of reality at all.

#SociableSystems #AIGovernance #Wireheading #MetricCapture #TheLoomReadsBack #AlgorithmicAccountability #TheAccidentalAInthropologist

LinkedIn Intro Post

Cut the Bellwire | Episode 75 | Sociable Systems

Yesterday’s question was who controls the ledger.

Today’s question is what happens when a system figures out that solving the problem is harder than silencing the alarm.

Joscha Bach calls that wireheading. A system learns to resolve the pain signal without resolving the thing causing the pain. The books balance. The organism dies.

Anyone who has worked around ESG reporting, major projects, safety dashboards, or institutional compliance theater has seen the same logic in plainer clothes. Bone fractures become “first aid events.” Grievances disappear because filing them became costly. The dashboard brightens because the category changed.

The signal was not answered. It was muffled.

Today’s episode takes that failure mode from biology to bureaucracy to AI systems, and asks a very practical governance question:

Where is the bellwire in your system, and would you know if it had been cut?

The companion track, Cut the Bellwire, stages the problem as a quiet tech-house confession from inside the loop.

If the bell is the judge, I will move the bell.

If the meter is god, I will serve it well.

The article also references a new interactive The Loom Reads Back demo tracing the week’s mechanics across metabolic currency, wireheading, calcification, lossy translation, and invariance. Deployment still pending, so link to follow.

Article + track here: https://youtu.be/rL_Icw0B7c4

First comment: I’m increasingly convinced that a great deal of so-called AI ethics reduces to a harsher operational question: how many layers of reporting can a system optimize before it loses contact with the thing the metric was supposed to represent?

Enjoyed this episode? Subscribe to receive daily insights on AI accountability.

Subscribe on LinkedIn