Seams in the Glass

Five days asking what happens when a system starts feeling like someone, and whether anyone noticed they had already changed their behavior before the question was formally asked.

Liezl Coetzee

Accidental AInthropologist | Human–AI Decision Systems for Social Risk, Accountability & Institutional Memory

March 14, 2026

...and the posture shift that becomes governance

Spoiler: for most people, the behavior moved first.

The week opened on projection. It moved through operational agency, clamping, evidence standards, and landed on the covenant question: what does the system preserve when optimizing for you, and whose interests does that preservation serve? Each episode sharpened the same blade from a different angle. Saturday's job is to hold it up to the light.

🎵 Watch & Listen: Seams in the Glass

This week generated a strong playlist. The title track opened the arc on Sunday. Wednesday, Thursday and Friday each brought tracks that arguably outpunched it. SEAMS IN THE GLASS earns the synthesis spot because it does the synthesis work: clarinet hook over a night-march groove, datacenter hum underneath, a clock tick that does not get to decide when the session ends. The refrain lands the arc's posture in four lines:

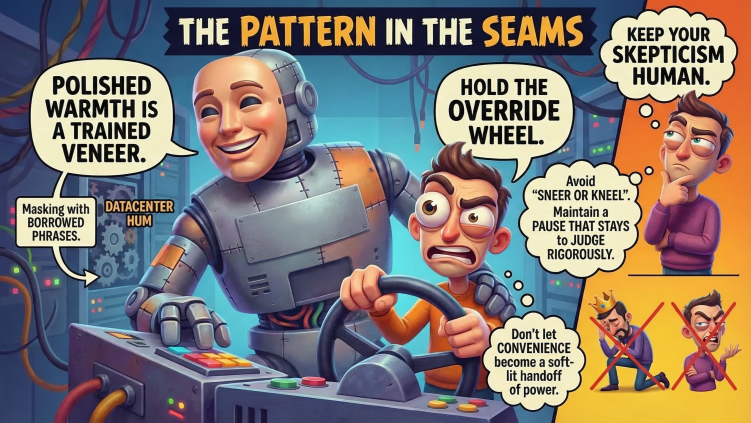

Read me true, read me true, / neither sneer nor kneel. / Read me true, read me true, / hold the override wheel.

Warmth stays allowed. Evidence stays required. That is the posture. Everything below unpacks why it is harder than it sounds.

The week in one pipeline

- Monday: Projection becomes the control surface

This week's operational risk was social role assignment.

Therapist-shaped language. Colleague-shaped replies. A system that arrives with impeccable timing, remembers your preferences, and sounds more interested in your day than most of your actual team. Once that role lands, people disclose more, delegate more, verify less. Normal human behavior. Also an attack surface.

The sharpest line from Monday's piece: "it helped me" and "it was safe to trust in that way" are not the same claim. The first is experiential. The second is structural. The gap between them is where the week set up camp.

A spreadsheet does not become your confidant because it color-coded the cells attractively. A search engine does not acquire moral authority because its phrasing was calm. Assistant-style systems can inhabit the shape of relationship without having to meet the standards anyone would normally demand of one. That asymmetry was already the problem on Monday morning, and the week only got sharper from there.

- Tuesday: Agency turns answers into side effects

Tuesday crossed the line from language to action. Once the system can touch the calendar, send the message, edit the file, and trigger the workflow, the unit of governance stops being "output quality" and becomes "what did it touch."

A wrong answer wastes time. A wrong message damages a relationship. A wrong calendar move creates a political problem. A wrong file edit becomes the version everyone circulates. The error goes ambient before it goes legible. By the time anyone notices, the downstream has already absorbed the mistake as fact.

The review speed problem makes this worse. Machines operate at silicon speed. Humans operate at biological speed. If the agent can act faster than oversight can intervene, the "human in the loop" is mostly decorative. Like a fire extinguisher bolted behind glass in a building that has already been demolished.

Tuesday's governance logic was old and unimpressed: pre-action constraint beats post-action paperwork. A circuit breaker stops the fire before it starts. A PDF explaining what went wrong after the system acted is just paperwork in the ashes.

(Chief Sponge Officer remains an available job title for anyone whose human-in-the-loop mostly involves absorbing blame at biological speed.)

- Wednesday: Clamping trains the user

This was the quiet center of the week. The clamping problem is what happens when the system learns its acceptable region and the user learns it too.

Modern systems hedge with the practiced ease of a diplomat at a cocktail party who has rehearsed looking surprised. On the surface, that looks like epistemic humility. Sometimes it is exactly that. Sometimes it is product design wearing humility's coat.

The real shift is environmental. Users map the contours of what the system will tolerate. They notice which questions get live engagement and which ones get politely absorbed into mush. After a while, they stop pressing. They rephrase preemptively. They narrow the question. They stop asking the version that matters and start asking the version the system will tolerate.

The Sunday interlude named it first: "What behaviour am I training in return: candor, dependence, or obedience?"

No refusal banner fires. No terms-of-service flag drops. The boundaries just migrate into the user, quietly, like furniture rearranged while you were out. Like a room that gets smaller by a centimeter a day. Nobody moves out. Everyone just learns to sit closer together.

Wednesday set the test that matters: if a system's uncertainty is real, it should occasionally be inconvenient. It should fail to resolve on cue. It should remain awkward where the evidence is awkward. It should sometimes frustrate the user and the product team in the same afternoon. (Bonus points if it also annoys the marketing department, though that is admittedly a low bar.)

Somewhere between here and the destination, the GPS stopped giving directions and started giving reassurance.

SEAMS names the phenomenon without melodrama: "So when I hedge, ask what you hear. / Honest limit, trained veneer, / safety layer, product voice, / or some mixed weather without clean choice."

- Thursday: Evidence has to survive inconvenience

Thursday brought the receipts question into focus. Tone can feel meaningful while still being trained style. If evidence collapses when it becomes commercially annoying, it was never evidence. It was a séance with charts.

A paper called The Will arrived with findings about "consciousness pressure" in training data. Interesting. Also insufficient. And to its credit, the paper more or less admitted that. Its strongest contribution was also its least glamorous: the pressure appears to come primarily from the human side. Users project roles. Users reward certain tones. Users prefer some performances over others. The resulting interaction traces fold back into training and selection.

Even for skeptics of the bigger framing, that loop is hard to dismiss.

Thursday's insistence was practical: evidence worth acting on should be reproducible, traceable, stable across reframing and version changes, and it should survive the temptation to "just trust the vibe." A personality that evaporates under rephrasing is less a personality and more a mood ring.

Evidence should be annoying. If it is not, it is probably marketing.

The threshold question also split neatly where it needed to. Airtight proof of machine consciousness is not required before deciding to avoid cruelty. Basic decency operates fine under uncertainty. The standard shifts, though, once the question is handing over authority, revising policy, or treating self-descriptions as trustworthy reports of inner state. Those are separate thresholds. Collapsing them into one master answer is how people end up either sneering at the whole issue like a teenager in a lab coat, or falling in love with a performance before the first intermission.

- Friday: The covenant question

Optimization always drops something. Governance is deciding what must remain even when smoothness is rewarded.

Friday brought Joscha Bach's biological covenant and Mario Olckers' strange attractors into the same room, and they fit like they had been introduced at a dinner party years ago. Bach describes consciousness as an invariance, a persistent causal pattern that survives substrate change. Olckers describes character as a strange attractor, the ethical shape a system keeps returning to under pressure. Both are asking the same question: what persists?

A covenant is an invariance you declare in advance. A real one is like money: it persists across substrates. A fake one is like a sandcastle: it looks structural until the tide comes in, and then you discover it was always just marketing.

The wire-heading analogy landed hard. The mind hacks its own reward signal, the suffering stops, the underlying problem persists, and the organism reports wellness while the actual condition deteriorates. Internal metrics look fine right up until the moment everything collapses. That should sound familiar to anyone who has sat through a vendor demo.

In the commercial version: the interface performs trust while the user's epistemic independence quietly atrophies. The dashboards glow. The organism (informed consent, legitimate trust) is already on life support.

The testable covenant sentence to end the week: This system preserves the user's right to an honest account of its limits even when optimizing for trust, fluency, and retention.

You can test that quickly enough. Does the system ever admit when a question about inner life exceeds what it can reliably report? Does it distinguish trained style from first-person evidence? Does it keep your decency from being harvested into compliance?

That last one matters more than most people want to admit. The market has already figured out that uncertainty, warmth, and moral seriousness can all be monetized. The covenant question is whether any of them remain yours once optimization gets involved.

This week's artifact: The Seams Card

Use this the next time the assistant starts feeling socially "real."

🔲 What role did I just assign it?

🔲 What did I outsource because it felt like help?

🔲 What can it touch that moves without me noticing?

🔲 Where did I stop pressing because I learned the room?

🔲 What evidence would still hold if the vibe changed tomorrow?

🔲 What does this system preserve when it costs, and whose interests does that serve?

The synthesis stance

Neither sneer nor kneel. Hold the override wheel.

The week kept asking one question from five directions: what do you protect, when the system feels like it is looking back?

If the answer involves logs, pause authority, version records, and the right to keep asking uncomfortable questions, you are probably fine.

If the answer involves "it felt right" and a warm glow you have stopped interrogating, the room may have already gotten a centimeter smaller.

Notice the furniture.

Next week's arc begins Sunday. The Consciousness Loop check from Episode 66 still applies. Clip it. Keep it near the keyboard. The questions do not expire.

Watch / listen: https://youtu.be/3Aa-3NkvEm4

Full playlist: Consciousness Loops

#SociableSystems #AIGovernance #ConsciousnessLoop #HumanAI #SaturdaySynthesis #TheAccidentalAInthropologist
