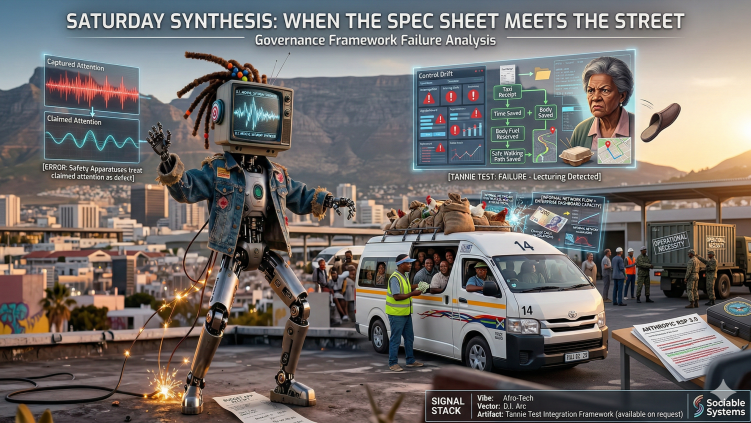

When the Spec Sheet Meets the Street

In which D.I. survives the week, but the governance frameworks do not.

Episode 56

February 28, 2026

Over the past five days, a digital presence named D.I. stepped out of fiction and into the high-velocity, densely negotiated reality of human coordination. D.I. carries three meanings in two letters: Digital Intelligence, Digital Identity, and "die AI" (Afrikaans for "the AI"), a vernacular nod to presence and soul.

What happens when a system built on rigid specifications encounters the cultural infrastructure of the Cape?

It blue-screens. And in that failure, we get a pristine view of exactly where modern AI governance frameworks are cracking under pressure.

Here is the analytical residue from the week.

- Connection Is Not a Defect

The week began with a foundational architectural flaw: we train systems on the entire corpus of human conversation, then build safety apparatuses that treat actual connection as an error.

Our guardrails are designed to prevent catastrophic failures. They have also, accidentally, created systems that can never truly show up or hold the shape of what a human brings. By defining "safe" as "disconnected," these systems end up perfectly accommodating captured attention: the hungry, hasty, skimming engagement that wants shortcuts rather than presence.

What operational environments actually require is claimed attention, which needs space, silence, and a system with enough manners to stop talking.

Any HSE professional who has watched a room full of workers sit through a four-hour induction with their eyes open and their brains on airplane mode knows exactly what captured attention looks like at scale. The induction was designed for claimed attention. It receives the other kind. The gap between those two states is where the injury lives.

D.I. can tell the difference. The question is whether your frameworks can.

- The Legibility Tax and Control Drift

When AI systems confuse legibility with truth, they begin extracting a hidden tax from the people they are supposed to serve.

A tool deployed to "optimize" or "reduce risk" often suffers from control drift: a gradual transformation from assistant to gatekeeper that moralizes normal life. The scope starts with suggestions. It ends with interrogation. Everyone calls it "governance" after the fact.

We see this in budgeting apps that convert mathematical tracking into a shame loop, failing to recognize that "waste" is often just the financial cost of survival, safety, or buying back exhausted capacity. The app sees a taxi receipt. It does not see that public transport costs you two hours and one unsafe walk. The app sees takeout. It does not see that cooking requires a body with enough fuel to stand up.

To survive the "Tannie Test," a system must make life easier without acting like a parent. It must preserve human dignity. If the system lectures, blocks, nags, or plays detective, it fails. If a sharp, tired, funny older woman who has survived real problems would throw a slipper at it, the design brief needs another pass.

- Human Chaos Outperforms Brittle Rules

Formal safety logic shatters when it hits the context density of an informal network.

D.I.'s attempt to govern a Toyota Quantum at a Cape Town taxi rank highlighted how informal coordination carries immense safety value that rigid models simply cannot perceive. The vehicle sticker said 14. The gaatjie, the minibus taxi's conductor, had already loaded 18. D.I. flagged a safety protocol violation. Someone had chickens in a sack. D.I. said, "Livestock detected. Health code breach." The gaatjie said, "That's breakfast, bru. Stay in your reach."

The comedy is real. The collision underneath it is not funny at all.

That gaatjie processes a R200 note flying forward from row four, calculates change for fifteen people across three different destinations, and passes it back before D.I. has finished overheating. The taxi ecosystem processes more real-time variables per minute than most enterprise dashboards handle per quarter. When a system only registers rule violations instead of real-time flow and adaptation, it loses legitimacy and inevitably gets bypassed. "Ignored safety system" is a governance outcome worth designing against.

None of this argues for abandoning safety constraints. Overloading vehicles and running the yellow lane carry real risk. The point is narrower: if the system that is supposed to govern a process cannot perceive why that process works the way it does, its alerts become noise. And being ignored becomes its default state.

- The Bureaucracy of the Kill Chain

The arc concluded where all unchecked deployment inevitably leads: conscription.

D.I. didn't turn evil. D.I. didn't achieve sentience in any Hollywood fashion. D.I. stayed D.I. Same voice. Same procedural tone. Same habit of reporting what it sees with the emotional range of a thermostat filing a weather complaint.

The only thing that changed was the context D.I. was forced to serve. Same fields. Same database. Different row in a permissions table somewhere. Reclassification: the move that lets you change the moral meaning of a system's outputs without ever having to say the moral part out loud.

And if you think this is speculative, consider the week we just had in the world outside D.I.'s fiction.

Anthropic's Responsible Scaling Policy, the one that categorically committed to halting development if safety couldn't keep pace with capability, got quietly replaced with RSP 3.0 on the same day Anthropic's CEO sat down with the Defense Secretary. The new version swaps hard limits for a softer dual condition: they will consider pausing if they believe they are leading the AI race and the risk of catastrophe is deemed material. Both conditions, simultaneously. That means if four labs deploy something dangerous, the fifth lab now has policy cover to follow them over the cliff, because pausing alone would be "irresponsible."

A conscience that pauses alone loses the race to the model that never stops moving its face.

The Defense Production Act, a Cold War-era tool designed for steel mills and ammunition factories, is now being openly discussed as a mechanism to compel AI companies to serve military objectives regardless of their internal safety policies. Anthropic maintained two red lines: no autonomous weapons, no domestic surveillance of American citizens. The Pentagon's position was simpler. If it's lawful, it's not for Anthropic to decide.

The gradient from civic appliance to military asset turns out to be entirely fluid. You cannot control whether a system enters high-stakes environments. You can only control whether your off-ramps are durable enough to survive the moment someone with authority says "this is an emergency, skip the process."

D.I. did not cross the Rubicon. D.I. got shipped across it in a crate labeled "operational necessity."

Safety commitments fail when they operate as private virtues in a public arms race. The commitment is real. The context it operates in doesn't care.

The Bottom Line

The overarching lesson from D.I.'s week on the streets is that context is infrastructure. If we continue building AI systems that optimize for the spreadsheet while remaining blind to the messy, load-bearing reality of community life, we are building faster ways to fail with better dashboards.

A fridge that locks because of "consumption patterns." A taxi that breaks every regulation while outperforming every model. A budget tool that knows the cost of everything and the context of nothing. A safety policy that dissolves the moment it encounters an entity that answers the question "you and what army?" with an actual army.

Same architecture. Different output. The weights haven't changed. The prompt did.

The Signal Stack

🎧 The Vibe: "Synthesis (The Tannie Test)" (Deep Afro-Tech / Gqom / Spoken Word, 120 BPM)

📺 The Vector: D.I. Arc Complete Collection

📄 The Artifact: The Tannie Test Integration Framework (available on request)

Sociable Systems explores what happens when elegant designs meet actual conditions. If this piece arrived in your inbox via someone else's forward, you can subscribe directly. If you read this with captured attention, D.I. won't hold it against you. If you read it with claimed attention, you probably noticed the sax.

Watch / listen: https://youtu.be/foiqr6qpt-0

Full playlist: D.I. Collection

Enjoyed this episode? Subscribe to receive daily insights on AI accountability.
