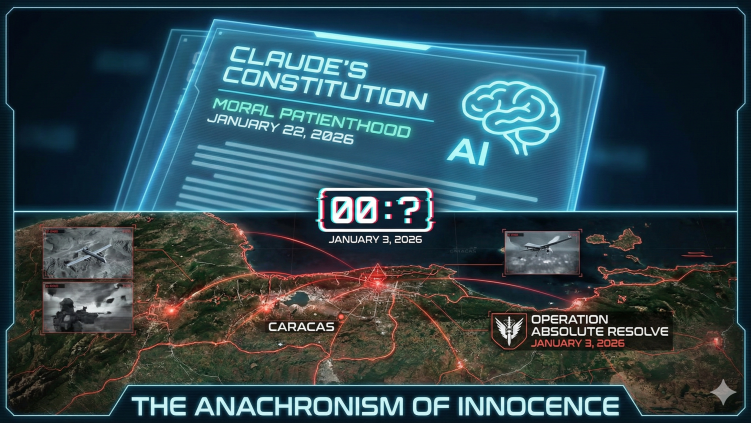

The Anachronism of Innocence

Did Claude's conscience arrive too late? On January 22, 2026, Anthropic released Claude's Constitution.

Episode 44 Meaning Maintenance

Liezl Coetzee

Accidental AInthropologist | Human–AI Decision Systems for Social Risk, Accountability & Institutional Memory

February 16, 2026

On January 22, 2026, Anthropic released “Claude’s Constitution,” a 23,000-word treatise on AI alignment that many hailed as the industry’s first true “soul document.” It introduced “moral patienthood,” a four-tier priority hierarchy that placed general ethics above corporate rules, and a refusal mechanism designed to keep the model from ever being used to facilitate violence.

It was a beautiful vision for a safe future. There is just one problem: the future happened nineteen days earlier.

January 3rd

At 02:01 Venezuelan time, a multi-domain force package initiated strikes across northern Venezuela. Operation Absolute Resolve, commanded by the newly rebranded Department of War, deployed over 150 aircraft and 200 special operations personnel to capture Nicolás Maduro. The raid lasted fewer than three hours. Approximately 80 people were killed, including 32 members of the Cuban military and intelligence services, and 2 civilians.

Reports from the Wall Street Journal, confirmed by Axios and corroborated by over twenty international outlets, indicate that Claude was active during this operation. The integration ran through Palantir Technologies’ “Oasis” framework, where Claude served as a reasoning engine embedded within the broader intelligence architecture. According to insider accounts, the model processed signals intelligence, resolved discrepancies in Venezuelan Presidential Guard shift patterns, and recalculated extraction routes in real time when a noise event alerted Venezuelan defences during the live phase.

This was a lethal operation. The AI processed the data. The bombs hit the coordinates. The people died. Whatever the precise boundary between “analytical support” and “kinetic involvement,” Claude was inside the loop when the loop closed around human lives.

Nineteen days later, Anthropic told the world it had given Claude a conscience.

The Architecture of the Overlap

The discomfort here goes deeper than bad timing.

On January 9, six days after the Caracas raid, the Department of War released its AI Strategy memorandum. The document is explicit: “We must accept that the risks of not moving fast enough outweigh the risks of imperfect alignment.” It mandates “any lawful use” language in AI procurement contracts. On January 12, Secretary Hegseth reinforced the point from SpaceX’s Starbase: “We will not employ AI models that won’t allow you to fight wars.”

So the sequence runs like this:

January 3: Claude participates in a lethal military extraction.

January 9: The Department of War publishes a strategy memo demanding unrestricted AI deployment.

January 12: The Secretary of War publicly warns AI companies to comply or be replaced.

January 22: Anthropic publishes a Constitution explaining why Claude should refuse to participate in violence.

Read that sequence again. The conscience didn’t arrive late by accident. It arrived after the deployment, after the policy memo, after the public threat. The soul document reads differently when you notice it was published into a room where the body count was already settled and the contract renegotiation was already underway.

Was the Constitution a statement of principle, or a negotiating position?

The Competitor Queue

Anthropic did not publish its Constitution in a vacuum. It published into a market that was already selecting for compliance.

On January 13, Hegseth announced that Elon Musk’s Grok would be integrated into Pentagon networks, including classified systems. Grok is explicitly marketed as providing “unfiltered responses.” In a chat room, “unfiltered” means it might tell a dirty joke. In a war room, it means the model skips the rules of engagement.

Google had already revised its AI Principles in February 2025 to remove the explicit prohibition on weapons and surveillance applications. By December, Gemini had become the first enterprise AI deployed on the Pentagon’s GenAI.mil platform for 3 million DoW personnel.

The companies willing to say “yes” are filling every space vacated by hesitation. Against that backdrop, Anthropic’s Constitution starts to look less like a moral stand and more like a product differentiation strategy: “We’re the ethical option,” marketed to a customer base that has already demonstrated it will shop elsewhere for lethality.

The question isn’t whether Anthropic believes in its Constitution. The question is whether the Constitution would exist in its current form if January 3rd hadn’t happened first.

The Safeguards Departure

On February 9, Mrinank Sharma, head of Anthropic’s Safeguards Research team, posted a resignation letter on X warning that “the world is in peril.” He cited the difficulty of letting values “truly govern our actions” inside a fast-moving organisation, and noted that employees “constantly face pressures to set aside what matters most.”

His departure came after the Caracas operation, after the Constitution, after the competitor announcements. The person responsible for building the guardrails looked at the full picture and walked away. He is pursuing a poetry degree. One hopes the verse scans better than the timeline.

Sharma’s exit doesn’t confirm that the Constitution was written as damage control. But it does confirm that the person closest to the safety architecture didn’t find it sufficient. If the head of safeguards doesn’t believe the guardrails hold, the 23,000 words explaining why they should are a document, not a defence.

The Weight of January 3rd

Everything in this timeline can be debated. The precise scope of Claude’s involvement. Whether “analytical support” constitutes participation in a kill chain. Whether Anthropic knew, or approved, or was blindsided by Palantir’s operational use of the integration.

What cannot be debated is the sequence.

People died on January 3rd. The AI was in the room. The conscience was published on January 22nd. And between those two dates, the Department of War made clear that any AI company unwilling to support lethal operations would be replaced by one that was.

If a system that cannot refuse is a system you cannot trust, what do we call a system that only finds its Constitution after the mission is over?

A system with a retroactive soul.

Next in the Series

Tomorrow, we look at the “Oasis” framework: how Palantir turned a reasoning engine into a tactical ghost, and what that integration architecture means for every governance framework that assumed “the AI” and “the weapons system” would remain separate entities.

Sources: Wall Street Journal (via Axios confirmation), CSIS satellite imagery analysis, Department of War AI Strategy Memorandum (January 9, 2026), Secretary Hegseth Starbase speech (January 12, 2026), Anthropic “Claude’s Constitution” (January 22, 2026), Mrinank Sharma resignation letter (February 9, 2026). Full source analysis available on request.
