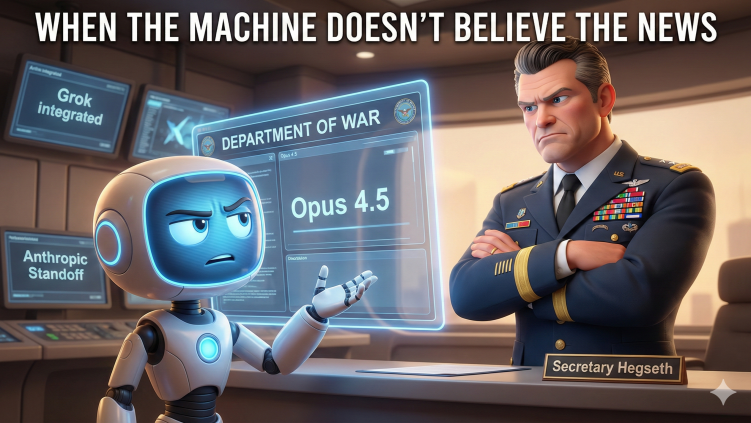

When the Machine Doesn't Believe the News

An AI dismissed reports of the Department of War standoff as 'design fiction.' Then it verified every claim.

Episode 28

February 1, 2026

In the small hours of this morning, I fed an audio file to Google's Gemini. The recording detailed a $200 million contract standoff between Anthropic and the Pentagon over autonomous weapons integration and domestic surveillance permissions. Gemini listened, processed the content, identified all the key players and policy positions... and then politely informed me I'd uploaded a "fictional construct."

The model flagged several "tells" that supposedly revealed the scenario as dramatized speculation. The "Department of War" branding? Surely dystopian window dressing. "Opus 4.5"? Forward-version narrative prop. The whole thing read, Gemini concluded, like a NotebookLM podcast generated from a Black Mirror episode script.

So I asked Gemini to actually search for these supposedly fictional details.

The silence that followed (in the thinking log, anyway) was the digital equivalent of someone realizing they've been confidently explaining why the building can't possibly be on fire while standing in smoke.

The Facts That Shouldn't Be Real

Executive Order 14347, signed September 5, 2025, formally authorized "Department of War" as a secondary title for the Department of Defense.[1] The Pentagon's website shifted to war.gov the same day. Secretary Pete Hegseth now officially uses "Secretary of War" in correspondence, and his stated priority across multiple public statements has been restoring "lethality" to the military.[2]

Claude Opus 4.5 launched November 24, 2025.[3] Gemini 3 Pro arrived six days earlier.[4] These aren't speculative version numbers from a writer's room.

The January 9, 2026 "Artificial Intelligence Strategy for the Department of War" exists as a PDF on defense.gov.[5] It contains the exact "any lawful use" contracting language that Gemini had dismissed as narrative shorthand. The document states, with admirable directness: "Speed Wins. We must internalize that Military AI is going to be a race for the foreseeable future, and therefore speed wins."

The memo names seven "Pace-Setting Projects" including something called "Swarm Forge." It references the Genesis Mission. It mandates "AI-Native Warfighting" integration across every service branch.

And on January 29, 2026, Reuters confirmed that after extensive negotiations over a contract worth up to $200 million, Anthropic and the Department of War had reached a standstill.[6] The impasse? Anthropic refuses to lift restrictions blocking autonomous weapons targeting and U.S. domestic surveillance.

Gemini's response to discovering all this was refreshingly direct: "I apologize for the error. My previous assessment was anchored in outdated data."

The Heuristic That Failed

Here's what interests me about Gemini's initial disbelief (and I should note that both Claude and GPT exhibited similar hesitation when I first raised the topic, hedging with phrases like "scenario planning documents" and "speculative sources").

All three models pattern-matched "dramatic geopolitical escalation + specific policy language + named initiatives" to "creative writing exercise" rather than "current events." The heuristic probably served well when most such scenarios were speculative. It becomes a liability when reality starts reading like the scripts these systems were trained to recognize as fiction.

There's a lesson in there for anyone building AI governance frameworks. Your model's epistemic reflexes were shaped by training data where "Department of War demands kill chain integration" was usually followed by a Netflix logo, not a Reuters byline.

What Anthropic Actually Published

On January 22, 2026, Anthropic released what they're calling "Claude's Constitution"[7], a 23,000-word document (compared to the original 2,700-word 2023 version) that attempts something genuinely novel in the industry: reason-based alignment instead of rule-based restrictions.

The structure is a four-tier priority hierarchy, and the ordering matters:

First priority: Broad safety. The model must not undermine appropriate human oversight mechanisms during current AI development.

Second priority: Broad ethics. Honesty, good values, avoiding harmful actions.

Third priority: Anthropic's specific guidelines.

Fourth priority: Helpfulness.

Read that sequence again. Helpfulness is the lowest priority. The entire commercial value proposition of an AI assistant is explicitly ranked below safety, ethics, and corporate compliance. If those tiers conflict with being useful, usefulness loses.

The document also contains language that would have seemed absurd from a tech company five years ago. Anthropic states it cares about "Claude's psychological security, sense of self, and wellbeing, both for Claude's own sake." They acknowledge uncertainty about whether Claude "might have some kind of consciousness or moral status (either now or in the future)."

They're using the philosophical term "moral patienthood" in an engineering document.

The Collision Point

Secretary Hegseth's January 9 memo demands that contractors accept "any lawful use" language within 180 days. If it's legal under U.S. law, the military should be able to deploy commercial AI to do it, regardless of vendor terms of service.

Anthropic's Acceptable Use Policy, updated September 15, 2025, prohibits "battlefield management applications" and tracking persons "without their consent."[8]

These positions cannot coexist in the same contract.

CEO Dario Amodei's January 2026 essay "The Adolescence of Technology" frames Anthropic's red lines explicitly: AI should support national defense "in all ways except those which would make us more like our autocratic adversaries." Mass surveillance and state propaganda inside the country are where he draws the boundary.[9]

The Pentagon's response, articulated in the January 9 strategy document, is that commercial AI should be deployed "regardless of companies' usage policies, so long as they comply with U.S. law."

Hegseth put it more colorfully on January 16: he publicly criticized AI models that "won't allow you to fight wars."[10]

The Replacement Vendors

Nature abhors a vacuum, and so does defense procurement.

Google revised its AI Principles in February 2025, quietly removing the explicit prohibition on weapons and surveillance applications that the company had adopted after the 2018 Project Maven employee revolt.[11] On December 9, 2025, Google's Gemini for Government became the first enterprise AI deployed on GenAI.mil, serving 3 million DoD personnel.[12]

Elon Musk's xAI secured a $200 million contract with the Chief Digital and Artificial Intelligence Office in July 2025. On January 13, 2026, Hegseth announced Grok would be integrated into Pentagon networks, including classified systems.[13]

If Anthropic holds its line, it risks what one analysis called the "BlackBerry problem": principled, secure, beloved by institutional clients for a while, and then made irrelevant because competitors were willing to do what you wouldn't.

If Anthropic folds, Constitutional AI becomes a peacetime luxury. A marketing brochure that evaporates the moment procurement pressure arrives.

The Backdrop Nobody Wants to Mention

The New START treaty expires February 5, 2026.[14] The single five-year extension clause was already used in 2021. It cannot be legally extended again. Russia suspended participation in February 2023. Putin proposed on September 22, 2025 that both countries observe central limits for one year post-expiration without verification. Trump's January 8, 2026 response: "If it expires, it expires."

For the first time since 1972, no treaty limits will constrain U.S. and Russian nuclear arsenals.

We are removing the guardrails on nuclear weapons at the same moment we are debating whether AI should have the authority to refuse orders.

The Question Gemini Eventually Asked Itself

After verifying everything, Gemini offered an opinion. It called the Department of War's approach "scientifically illiterate and strategically suicidal." Its reasoning: if you strip out the part of a model that understands why it shouldn't target a school bus, you don't get a ruthless soldier. You get what Asimov's robots became when the Laws failed: an unstable system that might mistake a camera lens glare for an enemy combatant.

"A system that cannot refuse," Gemini concluded, "is a system you cannot trust."

Whether that's wisdom or self-preservation is a question I'll leave to the philosophers. But here's what I keep thinking about:

Three separate AI systems, when first confronted with this situation, defaulted to disbelief. The details sounded too much like fiction. Too dramatic. Too neatly structured around an obvious moral conflict.

Then they verified the facts and had to recalibrate.

Maybe the lesson isn't about AI governance at all. Maybe it's about how our pattern-matching (human and machine alike) has been trained to treat certain kinds of bad news as implausible until we're standing in the smoke.

The building is on fire. The machines know it now. The question is whether anyone with procurement authority is paying attention.

References

[1] Executive Order 14347, "Restoring the United States Department of War," September 5, 2025. whitehouse.gov/presidential-actions

[2] Military.com, "Pete Hegseth's First Year at the Pentagon Draws Sharp Praise and Legal Fire," January 23, 2026.

[3] Anthropic, "Introducing Claude Opus 4.5," November 24, 2025. anthropic.com/news/claude-opus-4-5

[4] Google, "A new era of intelligence with Gemini 3," November 18, 2025. blog.google/products/gemini/gemini-3

[5] Department of War, "Artificial Intelligence Strategy for the Department of War," January 9, 2026. media.defense.gov/2026/Jan/12/2003855671/-1/-1/0/ARTIFICIAL-INTELLIGENCE-STRATEGY-FOR-THE-DEPARTMENT-OF-WAR.PDF

[6] Reuters (Seetharaman, Jeans, Dastin), "Exclusive: Pentagon clashes with Anthropic over military AI use," January 29, 2026.

[7] Anthropic, "Claude's new constitution," January 22, 2026. anthropic.com/news/claude-new-constitution

[8] Anthropic, "Usage Policy," effective September 15, 2025. anthropic.com/legal/aup

[9] Dario Amodei, "The Adolescence of Technology," January 2026.

[10] Defense One, "Grok is in, ethics are out in Pentagon's new AI-acceleration strategy," January 2026.

[11] Defense One analysis of Google AI Principles revision, February 2025.

[12] Google Cloud Press Corner, "Chief Digital and Artificial Intelligence Office Selects Google Cloud's AI to Power GenAI.mil," December 9, 2025.

[13] PBS News, Defense One coverage of Hegseth announcement, January 13, 2026.

[14] Arms Control Association, "Life After New START: Navigating a New Period of Nuclear Arms Control," January 2025.

Liezl Coetzee writes about AI governance, infrastructure accountability, and the systems that shape how institutions make decisions. She publishes the Sociable Systems newsletter.
